Three-dimensional (3D) modeling of objects plays an important role in quality assurance, re-engineering of objects, and mapping of interior spaces for quality measurement. Although laser scanning is the most common method for capturing 3D point clouds, camera-based methods have also been introduced in this field.

A 3D camera captures not only 2D information but also the required 3D information. Thus, the output of a 3D camera is usually a combination of a 3D point cloud and a 2D image, which is crucial for certain applications.

The process of capturing 3D data is complex. While 2D cameras are inexpensive and widespread, specialized hardware setups are typically needed for 3D sensing.

Types:

3D laser profiler

3D structured light camera

3D ToF camera

Binocular stereo camera

RGB-D cameras (these provide depth information along with RGB color values; they typically obtain depth using stereo vision, structured light, or time-of-flight methods)

In a 3D laser profiler, the laser measurement system consists of a CMOS camera and a laser light source. Its operating principle is based on a laser beam projected onto the surface of the target under measurement. The beam, or part of it, is then reflected onto a detector, and its position on the receiver shifts as the laser's point of incidence on the object surface varies.
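To turn the reflected laser light into a measurable position, the profiler must locate the line on the sensor. One common approach is an intensity-weighted centroid along each sensor column, which gives sub-pixel precision. The sketch below assumes a grayscale image stored as rows of intensities, a roughly horizontal laser line, and a fixed brightness threshold; the function name `laser_line_positions` is hypothetical and not taken from any product.

```python
from typing import List, Optional


def laser_line_positions(image: List[List[int]], threshold: int) -> List[Optional[float]]:
    """Sub-pixel row of the laser line in each image column (None if not found).

    Assumes a grayscale image given as rows of intensities, with the laser
    line running roughly horizontally across the sensor.
    """
    height, width = len(image), len(image[0])
    positions: List[Optional[float]] = []
    for col in range(width):
        weighted_sum = 0.0
        total = 0.0
        for row in range(height):
            value = image[row][col]
            if value >= threshold:  # ignore dim background pixels
                weighted_sum += row * value
                total += value
        # Centroid of the bright pixels, or None where no laser light was seen
        positions.append(weighted_sum / total if total > 0 else None)
    return positions
```

Each entry is one point of the 2D profile; real profilers typically perform a step like this in hardware at high speed.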

The collected information is then used to measure height, width, area, slope, and other complex surface features. These laser profiling systems are well suited to applications where non-contact measurement is critical for verifying surface and geometry quality against very tight tolerances under industrial operating conditions. 3D laser profiling is based on triangulation and makes use of embedded FPGA processing for high-speed acquisition in industrial manufacturing processes.
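As a rough illustration of how features such as height, width, and area can be read off a single profile, the sketch below works on one profile given as `(x, z)` samples in millimeters. The reference `baseline_z`, the `min_height` criterion for "part of the feature", and the name `profile_features` are assumptions made for this example, not a vendor algorithm.

```python
from typing import List, Tuple


def profile_features(
    profile: List[Tuple[float, float]], baseline_z: float, min_height: float
) -> Tuple[float, float, float]:
    """Height, width and cross-sectional area of a feature in one profile.

    profile: (x, z) samples in mm, sorted by x. Samples more than
    min_height above baseline_z are treated as belonging to the feature.
    """
    raised = [(x, z - baseline_z) for x, z in profile if z - baseline_z > min_height]
    if not raised:
        return 0.0, 0.0, 0.0
    height = max(h for _, h in raised)
    width = raised[-1][0] - raised[0][0]  # extent of the raised samples along x
    # Trapezoidal integration of the height above the baseline (negatives clipped)
    area = 0.0
    for (x0, z0), (x1, z1) in zip(profile, profile[1:]):
        h0 = max(z0 - baseline_z, 0.0)
        h1 = max(z1 - baseline_z, 0.0)
        area += 0.5 * (h0 + h1) * (x1 - x0)
    return height, width, area
```

Slope could be derived in the same spirit from differences between neighboring samples.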

Laser triangulation converts data acquired in 2D images into 3D information by projecting a laser line onto the material surface. The laser beam from the illumination source is set at an angle, known as α, relative to the high-speed camera sensor. This angle is fixed and carefully optimized for each application based on its accuracy and field-of-view requirements. α is then used to reconstruct the acquired image: the 2D profile is digitized and transformed into 3D data with additional 'depth' information.
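The role of α can be shown with a strongly simplified geometry. Assume the laser sheet is perpendicular to the reference surface, the camera is tilted by α from the laser plane, and imaging is orthographic. A surface point raised by height h then shifts the imaged line by h·sin(α), so h = Δ / sin(α), where Δ is the observed shift converted to millimeters. The function name, the `reference_row` of a flat surface, the `mm_per_pixel` scale, and the sign convention are all assumptions; real systems usually rely on a full calibration rather than this closed form.

```python
import math
from typing import List, Optional


def profile_heights(
    line_rows: List[Optional[float]],
    reference_row: float,
    mm_per_pixel: float,
    alpha_deg: float,
) -> List[Optional[float]]:
    """Convert laser-line positions (pixels) into surface heights (mm).

    Simplified model: laser perpendicular to the reference surface, camera
    tilted by alpha, orthographic imaging, so height h shifts the line by
    h * sin(alpha). Assumes the line moves toward smaller row indices as
    the surface rises.
    """
    sin_alpha = math.sin(math.radians(alpha_deg))
    heights: List[Optional[float]] = []
    for row in line_rows:
        if row is None:  # no laser line detected in this column
            heights.append(None)
        else:
            shift_mm = (reference_row - row) * mm_per_pixel
            heights.append(shift_mm / sin_alpha)
    return heights
```

A larger α gives more height change per pixel of shift (better depth resolution) at the cost of more occlusion, which is one reason the angle is tuned per application.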

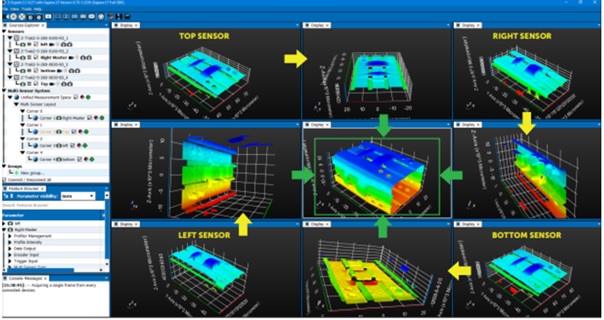

3D profiling is a 3D vision technique that measures the deformation of a fixed laser line projected onto an object, as seen by a camera mounted at a known offset angle. As the object passes through the laser line, thousands of profiles are generated per second, resulting in a highly accurate 3D image of the target object.

This technology is an active method of 3D vision, relying on lasers and a structured environment in addition to a machine vision camera to work effectively.

3D profiling requires motion to measure the profile of the object. This is what facilitates the ‘scan’ that the sensor captures as the target object passes through the laser line.
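Because each capture yields only one profile, the 3D image is built by stacking successive profiles along the direction of motion. The sketch below assumes a constant column spacing `x_spacing_mm` and a constant travel `y_step_mm` between captures (for example conveyor speed divided by profile rate); the function name is hypothetical.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]


def profiles_to_point_cloud(
    profiles: List[List[Optional[float]]], x_spacing_mm: float, y_step_mm: float
) -> List[Point]:
    """Stack successive height profiles into an (x, y, z) point cloud.

    profiles: one list of heights (mm, or None) per captured profile.
    x_spacing_mm: lateral distance between columns (assumed constant).
    y_step_mm: object travel between two profiles (assumed constant).
    """
    cloud: List[Point] = []
    for i, heights in enumerate(profiles):
        y = i * y_step_mm  # position along the direction of motion
        for j, z in enumerate(heights):
            if z is not None:  # skip columns where no line was detected
                cloud.append((j * x_spacing_mm, y, z))
    return cloud
```

With a hypothetical conveyor at 500 mm/s and 5,000 profiles per second, `y_step_mm` would be 0.1 mm; in practice an encoder is often used so that spacing stays correct if the speed varies.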

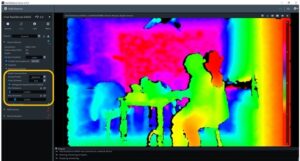

A ToF (time-of-flight) camera uses a different principle to gather 3D information: it measures the time an optical pulse takes to travel to an object and back, and uses that time to calculate the object's distance. The detection range can reach dozens of meters, with little interference from ambient light.
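The pulse principle reduces to d = c·t / 2, since the light covers the distance twice. Many ToF cameras instead measure the phase shift φ of amplitude-modulated light at frequency f, giving d = c·φ / (4πf); that continuous-wave variant is included below as a separate illustration. Function names are hypothetical.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def distance_from_round_trip(time_s: float) -> float:
    """Pulsed ToF: light travels to the object and back, so halve the path."""
    return SPEED_OF_LIGHT * time_s / 2.0


def distance_from_phase(phase_rad: float, modulation_hz: float) -> float:
    """Continuous-wave ToF variant: distance from the phase shift of modulated light.

    The result is only unambiguous up to c / (2 * modulation_hz).
    """
    return SPEED_OF_LIGHT * phase_rad / (4.0 * math.pi * modulation_hz)
```

A 10 ns round trip corresponds to about 1.5 m, which shows why ToF sensors need very precise timing electronics.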

Stereo vision fixes two or more cameras at specific positions relative to one another and uses this setup to capture different images of a scene, match the corresponding pixels, and compute how each pixel's position differs between the images to calculate its position in 3D space. This is roughly how humans perceive the world: our eyes capture two separate "images" of the world, and our brains compare an object's position in the views from the left and right eyes to determine its 3D position. Stereo is attractive because it involves simple hardware: just two or more ordinary cameras. However, the approach is poorly suited to applications where accuracy or speed matter, because using visual details to match corresponding points between the camera images is not only computationally expensive but also error-prone in textureless or visually repetitive environments.
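The matching step and the depth calculation can be sketched for a single scanline. This assumes rectified images, so corresponding points lie on the same row, and uses sum-of-absolute-differences (SAD) block matching, one simple matching method among many. Depth then follows from Z = f·B / d, with focal length f in pixels, baseline B in meters, and disparity d in pixels. All names are hypothetical, and the caller is assumed to keep `x + half_window` inside the row.

```python
from typing import List


def match_disparity(
    left_row: List[int], right_row: List[int], x: int, half_window: int, max_disparity: int
) -> int:
    """Disparity of pixel x by SAD block matching along one rectified scanline."""
    best_d, best_cost = 0, float("inf")
    for d in range(max_disparity + 1):
        if x - d - half_window < 0:
            break  # window would run past the left edge of the right image
        cost = sum(
            abs(left_row[x + k] - right_row[x - d + k])
            for k in range(-half_window, half_window + 1)
        )
        if cost < best_cost:
            best_d, best_cost = d, cost
    return best_d


def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth Z = f * B / d; zero disparity means the point is effectively at infinity."""
    if disparity_px <= 0:
        return float("inf")
    return focal_px * baseline_m / disparity_px
```

On a uniform (textureless) row every candidate disparity has the same cost, so the chosen match is arbitrary, which is exactly the failure mode described above.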

RGB-D involves using a special type of camera that captures depth information (“D”) in addition to a color image (“RGB”). Specifically, it captures the same type of color image you’d get from a normal 2D camera, but, for some subset of the pixels, also tells you how far in front of the camera the object is. Internally, most RGB-D sensors work through either “structured light,” which projects an infrared pattern onto a scene and senses how that pattern has warped onto the geometric surfaces, or “time of flight,” which looks at how long projected infrared light takes to return to the camera.
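Because an RGB-D camera gives a depth value per pixel, each valid pixel can be back-projected into 3D with the pinhole camera model: X = (u − cx)·Z / fx and Y = (v − cy)·Z / fy. The sketch assumes the depth and color images are already aligned, that depth is in meters with 0 marking pixels without a reading, and that the intrinsics `fx`, `fy`, `cx`, `cy` come from calibration; the function name is hypothetical.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]


def rgbd_to_points(
    depth: List[List[float]],
    color: List[List[RGB]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Tuple[float, float, float, RGB]]:
    """Back-project aligned depth and color images into colored 3D points."""
    points = []
    for v, (depth_row, color_row) in enumerate(zip(depth, color)):
        for u, (z, rgb) in enumerate(zip(depth_row, color_row)):
            if z <= 0:  # pixel without a valid depth reading
                continue
            x = (u - cx) * z / fx  # pinhole model, horizontal axis
            y = (v - cy) * z / fy  # pinhole model, vertical axis
            points.append((x, y, z, rgb))
    return points
```

Skipping zero-depth pixels reflects that only some of the pixels carry depth.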

3D Representations

Once you’ve captured 3D data, you need to represent it in a format that makes sense as an input to the processing pipeline you’re building. There are four main representations:

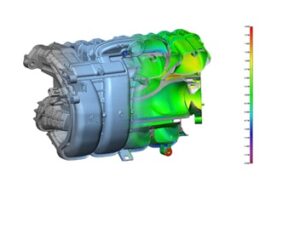

Point clouds are simply collections of points in 3D space; each point is specified by an (xyz) location, optionally along with some other attributes (like rgb color). They’re the raw form that LiDAR data is captured in, and stereo and RGB-D data (which consist of an image labeled with per-pixel depth values) are usually converted into point clouds before further processing.
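Point clouds are unordered, so pipelines that expect a regular grid often convert them into another representation. As one example, the sketch below bins points into cubic voxels of an assumed edge length `voxel_size` and counts the points in each occupied voxel. The sparse-dictionary layout and the name `voxelize` are illustrative choices, not a standard API.

```python
import math
from typing import Dict, Iterable, Tuple


def voxelize(
    points: Iterable[Tuple[float, float, float]], voxel_size: float
) -> Dict[Tuple[int, int, int], int]:
    """Map a point cloud onto a sparse voxel grid: {(i, j, k): point count}."""
    grid: Dict[Tuple[int, int, int], int] = {}
    for x, y, z in points:
        # floor() keeps negative coordinates in the correct voxel
        key = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        grid[key] = grid.get(key, 0) + 1
    return grid
```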

3D Machine Vision captures an object’s location and shape in a format suitable for processing by a computer. An object’s surface is represented by a list of three-dimensional coordinates (X, Y, Z), referred to as a “Point Cloud.”

Point clouds represent a significant leap in the field of 3D modelling and spatial data analysis.

At their core, point clouds are collections of data points in a three-dimensional coordinate system.

Each point in the cloud possesses its own set of coordinates and, in some cases, additional information like color and intensity.

This data structure allows for the detailed and accurate representation of the shape and surface characteristics of physical objects and spaces.
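To make "coordinates plus optional attributes" concrete, the sketch below serializes points with an RGB color into the ASCII variant of the PLY format, one widely used file format for point clouds. The tuple layout `(x, y, z, (r, g, b))` and the function name are assumptions; attributes such as intensity would be added as further `property` lines in the same way.

```python
from typing import List, Tuple

ColoredPoint = Tuple[float, float, float, Tuple[int, int, int]]


def to_ascii_ply(points: List[ColoredPoint]) -> str:
    """Serialize (x, y, z, (r, g, b)) points as an ASCII PLY file string."""
    header = [
        "ply",
        "format ascii 1.0",
        f"element vertex {len(points)}",
        "property float x",
        "property float y",
        "property float z",
        "property uchar red",
        "property uchar green",
        "property uchar blue",
        "end_header",
    ]
    # One line per point: coordinates followed by its color attributes
    body = [f"{x} {y} {z} {r} {g} {b}" for x, y, z, (r, g, b) in points]
    return "\n".join(header + body) + "\n"
```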

The importance of point clouds lies in their versatility and precision. They are used extensively across industries; in manufacturing, for example, they support product design and quality control.
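A typical quality-control use is checking the flatness of a nominally flat surface. The sketch below fits a least-squares plane z = a·x + b·y + c by solving the 3×3 normal equations with Cramer's rule, then reports the spread of the residuals. Measuring residuals along z is a simplification that is reasonable only for nearly horizontal surfaces; the function names are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def _det3(m: Sequence[Sequence[float]]) -> float:
    """Determinant of a 3x3 matrix."""
    return (
        m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
        - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
        + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0])
    )


def fit_plane(points: List[Point]) -> Tuple[float, float, float]:
    """Least-squares plane z = a*x + b*y + c via the normal equations."""
    n = float(len(points))
    sx = sum(p[0] for p in points)
    sy = sum(p[1] for p in points)
    sz = sum(p[2] for p in points)
    sxx = sum(p[0] * p[0] for p in points)
    syy = sum(p[1] * p[1] for p in points)
    sxy = sum(p[0] * p[1] for p in points)
    sxz = sum(p[0] * p[2] for p in points)
    syz = sum(p[1] * p[2] for p in points)
    a_matrix = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    det = _det3(a_matrix)
    if det == 0:
        raise ValueError("degenerate point set (e.g. collinear points)")
    coeffs = []
    for col in range(3):  # Cramer's rule: replace one column with the right-hand side
        m = [row[:] for row in a_matrix]
        for r in range(3):
            m[r][col] = rhs[r]
        coeffs.append(_det3(m) / det)
    return coeffs[0], coeffs[1], coeffs[2]


def flatness(points: List[Point]) -> float:
    """Peak-to-valley deviation (along z) from the best-fit plane."""
    a, b, c = fit_plane(points)
    residuals = [z - (a * x + b * y + c) for x, y, z in points]
    return max(residuals) - min(residuals)
```

The result could then be compared against a part's flatness tolerance to make a pass/fail decision.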

In CT scanning, 2D image slices are captured, and a 3D model can be reconstructed from the resulting volume data.
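Stacking the slices gives a volume in which each voxel has a known physical position. The sketch below extracts 3D points for voxels whose intensity exceeds a single global threshold, a deliberately simple segmentation chosen for illustration. The slice layout, `pixel_spacing_mm`, `slice_thickness_mm`, and the function name are assumptions.

```python
from typing import List, Tuple


def ct_slices_to_points(
    slices: List[List[List[int]]],
    pixel_spacing_mm: float,
    slice_thickness_mm: float,
    threshold: int,
) -> List[Tuple[float, float, float]]:
    """Turn a stack of 2D CT slices into 3D points for voxels above a threshold.

    slices: 2D slices (rows of intensities), ordered along the scan axis.
    """
    points = []
    for k, image in enumerate(slices):
        z = k * slice_thickness_mm  # slice index gives the third coordinate
        for row, values in enumerate(image):
            for col, value in enumerate(values):
                if value >= threshold:  # keep only voxels of the material of interest
                    points.append((col * pixel_spacing_mm, row * pixel_spacing_mm, z))
    return points
```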

3D machine vision cameras enable customers to boost performance and productivity across a range of applications and industries. Applications such as bin picking, assembly, inspection, measurement, and guidance are used in industries including agriculture, automotive, manufacturing of every kind from steel to pharmaceuticals, commercial automated vehicles, logistics, and many more.

Note: The above content is provided for understanding purposes only. It has been collected from various websites and research papers in the belief that it may be distributed or used. If anything is found objectionable, please inform us at [email protected], and we shall remove it after verifying the genuineness of the claim. This information is for non-commercial purposes only. If any concept or explanation is found to be erroneous, please let us know so it can be corrected.

We'll be glad to help you! Please contact our Sales Team for more information.
